Conversation overview

Alpha

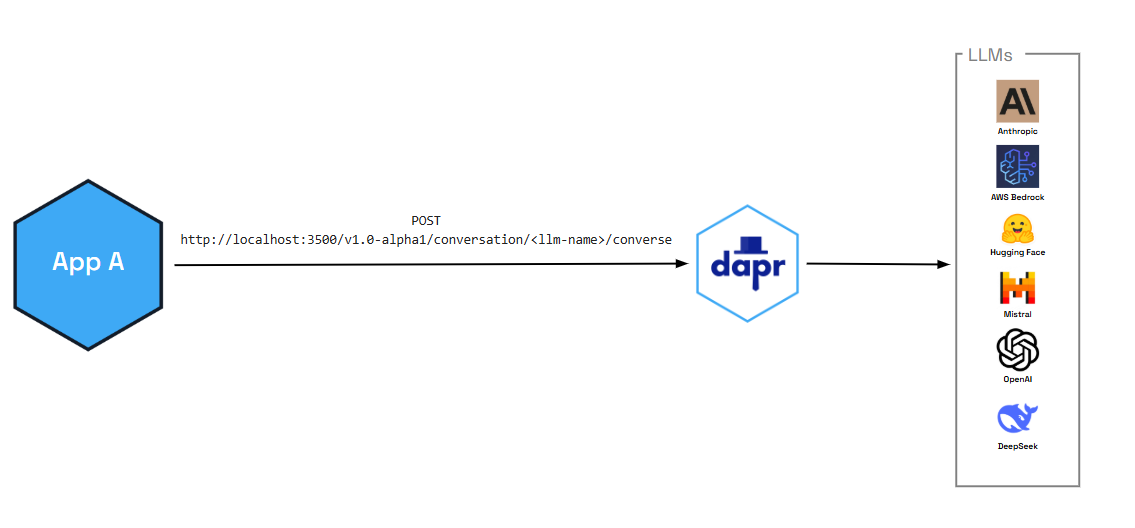

The conversation API is currently in alpha.

Dapr’s conversation API reduces the complexity of securely and reliably interacting with large language models (LLMs) at scale. Whether you’re a developer who doesn’t have the necessary native SDKs or a polyglot shop that just wants to focus on the prompt aspects of LLM interactions, the conversation API provides one consistent API entry point to talk to underlying LLM providers.

In addition to enabling critical performance and security functionality (like caching and PII scrubbing), the conversation API also provides:

- Tool calling capabilities that allow LLMs to interact with external functions and APIs, enabling more sophisticated AI applications

- OpenAI-compatible interface for seamless integration with existing AI workflows and tools

You can also pair the conversation API with Dapr functionalities, like:

- Resiliency policies, including circuit breakers to handle repeated errors, timeouts to safeguard against slow responses, and retries for temporary network failures

- Observability with metrics and distributed tracing using OpenTelemetry and Zipkin

- Middleware to authenticate requests to and from the LLM

Features

The following features are out-of-the-box for all the supported conversation components.

Caching

The Conversation API supports two kinds of caching:

- Prompt caching: Some LLM providers cache prompt prefixes on their side to speed up repeated prompts and reduce their cost. You enable this per request via the API using the promptCacheRetention parameter (for example, 24h for OpenAI). See the Conversation API reference for request-level options. Support depends on the provider.

- Response caching: Conversation components can cache full LLM responses in the sidecar. When you set the component metadata field responseCacheTTL (for example, 10m), Dapr caches responses keyed by the request (prompt and options). Repeated identical requests are served from the cache without calling the LLM, reducing latency and cost. This cache is in-memory and per sidecar. Configure this in your conversation component spec.
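To make the response caching behavior concrete, here is a minimal Python sketch of an in-memory cache keyed by the request (prompt and options) with a time-to-live. It is only an illustration of the idea described above, not Dapr's implementation: the `ResponseCache` class, the `call_llm` callback, and the hashing scheme are all assumptions made for this example.

```python
import hashlib
import json
import time


class ResponseCache:
    """Illustrative in-memory cache keyed by the full request, with a TTL.

    Hypothetical helper for explanation only; not part of Dapr.
    """

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, response)

    @staticmethod
    def key_for(prompt, options):
        # Canonical JSON so that the ordering of options does not change the key.
        canonical = json.dumps({"prompt": prompt, "options": options}, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get_or_call(self, prompt, options, call_llm):
        key = self.key_for(prompt, options)
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: the LLM is not called
        response = call_llm(prompt, options)  # cache miss or expired entry
        self._entries[key] = (now + self.ttl_seconds, response)
        return response
```

In this sketch, two calls with the same prompt and options inside the TTL window invoke `call_llm` only once, while changing any option produces a different key and a fresh call. Because the entries live in a plain dictionary, the cache disappears with the process, which mirrors the "in-memory and per sidecar" caveat above.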

Response formatting

You can request structured output from the model by passing a responseFormat (JSON Schema) in the request. This is supported by DeepSeek, Google AI, Hugging Face, OpenAI, and Anthropic. See the Conversation API reference.
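As a rough illustration, the Python sketch below attaches a JSON Schema to a request body and then checks a structured reply on the client side. Only the `responseFormat` field name comes from this page; the `inputs` shape, the `build_request` and `parse_structured_reply` helpers, and the sample schema are assumptions for this example, so check the Conversation API reference for the real request layout.

```python
import json

# JSON Schema describing the structure we want back from the model.
PERSON_SCHEMA = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "age": {"type": "integer"},
    },
    "required": ["name", "age"],
}


def build_request(prompt, response_format):
    """Build a request body (hypothetical shape apart from `responseFormat`)."""
    return {
        "inputs": [{"content": prompt}],  # placeholder shape, an assumption
        "responseFormat": response_format,
    }


def parse_structured_reply(text, schema):
    """Parse the model's JSON reply and check that required fields are present."""
    data = json.loads(text)
    missing = [key for key in schema.get("required", []) if key not in data]
    if missing:
        raise ValueError(f"reply is missing required fields: {missing}")
    return data
```

The required-field check is intentionally shallow. A full JSON Schema validator would be the more thorough choice if your application depends on the reply matching the schema exactly.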

Usage metrics

Responses can include token usage (promptTokens, completionTokens, totalTokens) for the conversation. See Response content in the API reference.
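If you want to track cost over a longer session, one option is to add up the usage reported by each response. The sketch below assumes you have already extracted the usage object from a response as a dictionary with the `promptTokens`, `completionTokens`, and `totalTokens` fields named above; where that object sits inside the response is not shown here, and the `UsageTotals` class is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class UsageTotals:
    """Running totals of token usage across several responses (illustrative)."""

    prompt_tokens: int = 0
    completion_tokens: int = 0
    total_tokens: int = 0

    def add(self, usage):
        """Accumulate one response's usage; `usage` may be None when omitted."""
        if not usage:
            return self
        self.prompt_tokens += int(usage.get("promptTokens", 0))
        self.completion_tokens += int(usage.get("completionTokens", 0))
        self.total_tokens += int(usage.get("totalTokens", 0))
        return self
```

Treating a missing usage object as zero keeps the accumulator safe for responses that do not report token counts.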

Personally identifiable information (PII) obfuscation

The PII obfuscation feature identifies and removes any form of sensitive user information from a conversation response. Simply enable PII obfuscation on input and output data to protect your privacy and scrub sensitive details that could be used to identify an individual.

The PII scrubber obfuscates the following user information:

- Phone number

- Email address

- IP address

- Street address

- Credit cards

- Social Security number

- ISBN

- Media Access Control (MAC) address

- Secure Hash Algorithm 1 (SHA-1) hex

- SHA-256 hex

- MD5 hex
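To give a feel for what pattern-based scrubbing looks like, here is a small Python sketch covering a few of the categories above (email, IPv4 address, MAC address, and hex digests). It is not Dapr's scrubber: the regular expressions are simplified, the `scrub` function is hypothetical, and the `<EMAIL>`-style placeholders are an assumption about output format made only for this example.

```python
import re

# Simplified patterns for a subset of the categories listed above.
# These are illustrative and will miss many real-world variants.
PATTERNS = [
    ("EMAIL", re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")),
    ("IP", re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")),
    ("MAC", re.compile(r"\b(?:[0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}\b")),
    ("SHA256", re.compile(r"\b[0-9A-Fa-f]{64}\b")),
    ("SHA1", re.compile(r"\b[0-9A-Fa-f]{40}\b")),
    ("MD5", re.compile(r"\b[0-9A-Fa-f]{32}\b")),
]


def scrub(text):
    """Replace each match with a placeholder naming the detected category."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"<{label}>", text)
    return text
```

Running every pattern over both the prompt and the reply corresponds to enabling obfuscation on input and output data. Categories such as phone numbers and street addresses need considerably more careful matching than this sketch attempts.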

Tool calling support

The conversation API supports advanced tool calling capabilities that allow LLMs to interact with external functions and APIs. This enables you to build sophisticated AI applications that can:

- Execute custom functions based on user requests

- Integrate with external services and databases

- Provide dynamic, context-aware responses

- Create multi-step workflows and automation

Tool calling follows OpenAI’s function calling format, making it easy to integrate with existing AI development workflows and tools.
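The sketch below shows what a tool definition in OpenAI's function calling format looks like, together with a small dispatcher that runs a tool call requested by the model. The `get_weather` function, the `REGISTRY` mapping, and `run_tool_call` are hypothetical names for this example, and the sketch assumes tool calls reach your code in the OpenAI shape (a function name plus a JSON-encoded `arguments` string); consult the Conversation API reference for how they are surfaced in practice.

```python
import json


def get_weather(city):
    """Hypothetical local function the model may ask the application to run."""
    return {"city": city, "forecast": "sunny"}


# Tool definition in OpenAI's function calling format:
# a name, a description, and a JSON Schema for the parameters.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}

# Maps tool names to the Python callables that implement them.
REGISTRY = {"get_weather": get_weather}


def run_tool_call(tool_call):
    """Execute one OpenAI-style tool call and return the result as a JSON string."""
    function = tool_call["function"]
    handler = REGISTRY.get(function["name"])
    if handler is None:
        raise KeyError(f"unknown tool: {function['name']}")
    arguments = json.loads(function["arguments"])  # arguments arrive as a JSON string
    return json.dumps(handler(**arguments))
```

In a multi-step workflow, the string returned by `run_tool_call` would typically be sent back to the model as a tool result so it can compose the final answer.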

Demo

Watch the demo presented during Diagrid’s Dapr v1.15 celebration to see how the conversation API works using the .NET SDK.

Try out the conversation API

Quickstarts and tutorials

Want to put the Dapr conversation API to the test? Walk through the following quickstart and tutorials to see it in action:

| Quickstart/tutorial | Description |
|---|---|
| Conversation quickstart | Learn how to interact with Large Language Models (LLMs) using the conversation API. |

Start using the conversation API directly in your app

Want to skip the quickstarts? Not a problem. You can try out the conversation building block directly in your application. After Dapr is installed, you can begin using the conversation API starting with the how-to guide.